See your data clearly

Transform your business with brilliant visualization products, services, and people

Certified Tableau Partner

Human-Centered Design Methodology

Whole Team Approach

Enjoy the Benefits of a Data-Driven Culture

Empower your team with expert tools, training, and server resources. Achieve your business goals more quickly and efficiently with Boulder Insight as your trusted advisor.

Services On Demand: Our Whole Team Approach

Define

Data

UI/UX

Build

Automate

Training

Client Success

Make friends with your data

Master your data with Ninja-level skills. Transform your business with the best tools, training, and resources from Boulder Insight.

Additional Products and Services

VIZbot

Automatically send interactive or static reports from your Tableau Server to any set of users, on any schedule.

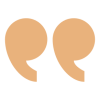

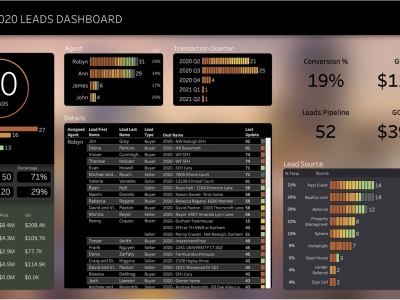

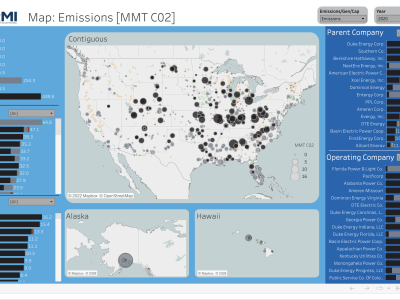

Tableau Templates

Download a suite of pre-formatted Tableau dashboard layouts, easily customizable to your organization’s brand and structure.

Tableau Server Guard

Use our expertise to keep your Tableau Server protected and performing at its best.

Training

Get Tableau Certified Training for any level of Tableau expertise, from beginner to advanced. We also offer custom curricula that use your data.